Encoding and annotating medieval sources

A Zoom colloquium supported by the Research Group for Medieval Philology, 3 December 2020


In the autumn of 2020, young students and scholars were invited to give talks at this colloquium. The result was 11 talks representing a wide variety of perspectives, all of them focused on medieval sources. In addition, a number of other scholars took part in the discussion, which turned out to be lively and highly informative. All in all, more than 40 scholars from ten different countries attended the colloquium.

The colloquium was organised by Odd Einar Haugen and Juliane Tiemann, members of the Research Group for Medieval Philology at the University of Bergen. It was held during the second wave of the COVID-19 pandemic, so almost everybody participated via Zoom from their own homes (and in a few cases, offices). This was a new situation for most of us, but it went surprisingly well.

On this retrospective page, we have kept the original programme (which was indeed followed), and below it we have added the paper presentations that speakers have shared with us.

We hope that the colloquium has helped establish new contacts among the participating scholars. We were also happy to welcome bachelor's, master's and PhD students as well as early (and not so early) career researchers. This was a wider audience than we had hoped for, but so much the better.

PDF version of the programme with abstracts for all talks

Programme

09:00–09:20 Odd Einar Haugen and Juliane Tiemann: Welcome and introduction to the colloquium

09:20–09:50 || Sven Kraus, Basel

Menota as a basis for hyper-annotated editions. Experiments with Pamphilus saga

Pamphilus saga was translated from Medieval Latin into Old Norse in the middle of the 13th century. The Latin original is full of material taken from classical Latin poets such as Ovid and Virgil. In the current stage of my PhD project, I am looking into how these intertextual references were translated into Old Norse. This is one of three stages towards understanding the position of Pamphilus saga in the Old Norse literary polysystem. The intertextual connections and literary strands that make up the polysystem can be better understood as a network, which can be analyzed with the methods of network analysis. All textual data and metadata are stored in a graph database; the textual data are taken from Menota and, where applicable, supplemented from other sources such as ONP and emroon. In the current phase, the Latin and Old Norse texts are being aligned in parallel, which will allow a transparent mapping of the Latin intertextual references onto the Old Norse translation. The way the data are modelled will not only facilitate the analysis but may also form the basis for a highly enriched way of displaying intertextual networks and of reading texts with a networked understanding of literary production. The presentation will focus on the advantages this type of text display may offer, the technical processes and challenges involved in making the database work, and how Menota XML editions can form the basis for this type of textual work.

09:50–10:20 || Paola Peratello, Verona

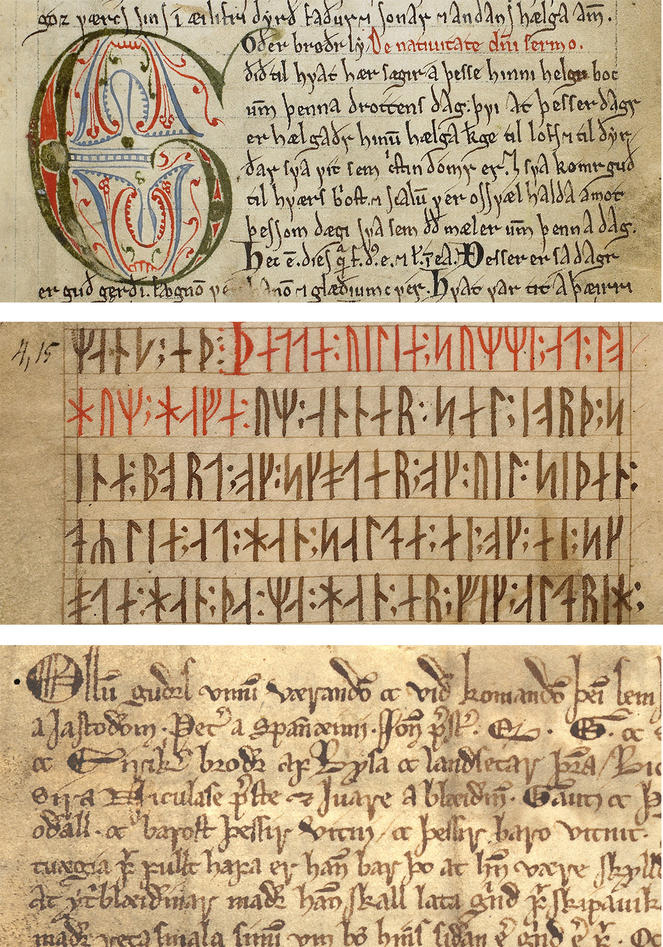

Encoding scribal and editorial interventions in AM 28 8vo (Codex Runicus)

Additions, insertions, deletions and corrections are only some examples of the variety of scribal and editorial interventions found in manuscript texts. In the creation of a digital edition, the encoding of these features is a fundamental step. This presentation shows how scribal and editorial interventions in fols. 1r-3r of Codex Runicus, AM 28 8vo (ca. 1300), are encoded. This work is part of the research project of encoding Codex Runicus, a Danish medieval manuscript written entirely in medieval runes. Letters of the Latin alphabet are nevertheless also found in the margins and within the text.

The digital edition of AM 28 8vo will be published in the Menota Public Catalogue (https://clarino.uib.no/menota/catalogue). To this end, scribal and editorial interventions will be encoded according to the guidelines set out in chapters 8 and 9 of The Menota handbook v. 3.0, which is compliant with XML-TEI P5. For instance, the element <add> is used for characters (i.e. runes or letters of the Latin alphabet), words or phrases added in the margins or within the text by the scribes themselves or by later hands.
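As an illustration of how interventions encoded with <add> can later be queried, here is a minimal Python sketch using only the standard library. It assumes a simplified fragment in the TEI namespace with the TEI attributes `place` and `hand` on `<add>`; the words and the hand identifiers `#h1` and `#h2` are invented for illustration and are not taken from AM 28 8vo, and a real Menota file would carry far more structure.

```python
import xml.etree.ElementTree as ET

TEI_NS = "http://www.tei-c.org/ns/1.0"

# Hypothetical TEI fragment: the words and the hand identifiers below are
# invented for illustration and are not taken from AM 28 8vo.
SAMPLE = f"""
<p xmlns="{TEI_NS}">
  <w>ok</w>
  <add place="margin" hand="#h2">hær</add>
  <w>sva</w>
  <add place="above" hand="#h1">ær</add>
</p>
"""


def list_additions(xml_text):
    """Return (text, place, hand) for every TEI <add> element in the fragment."""
    root = ET.fromstring(xml_text.strip())
    # iter() walks the whole tree in document order; tags carry the namespace.
    return [
        (add.text, add.get("place"), add.get("hand"))
        for add in root.iter(f"{{{TEI_NS}}}add")
    ]


for text, place, hand in list_additions(SAMPLE):
    print(f"{text!r}: place={place}, hand={hand}")
```

Grouping the result by `hand` would, for instance, separate additions by the main scribe from those by later hands.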

This presentation will illustrate how specific textual layers (i.e. the type of intervention, and when and by whom it was made) in the selected section, fols. 1r-3r of Codex Runicus, are encoded and visualized.

10:20–10:30 Virtual tea & coffee break

10:30–11:00 || Tonje H. Waldersnes, Bergen

Encoding of 14th and 15th century diplomas

As the digital age progresses, the desire for more knowledge and the wish to make it accessible have given philologists a new kind of tool: XML encoding. Transcriptions of medieval codices, fragments and documents held in archives and libraries can now be read and researched at home on a computer. The knowledge of how to make such transcriptions has, however, been limited to a few interested scholars, leaving a gap between those who are familiar with encoding and those who are not. To close this gap, several scholars have worked on simplifying the process of XML encoding for digital archives such as Menota.

In this presentation, I will try to convey the experience of using MenotaBlitz and Menota from the point of view of a student with limited knowledge of encoding and new to the world of digital transcription. I will first go through my own attempt at a digital transcription of a Norwegian diploma from the 14th century, made as an assignment in a course on diplomas at the University of Bergen. I will then show the additional information that can be stored in such a file, and lastly how this experience and collective work can be applied to the project of my master's thesis, concerning 14th- and 15th-century diplomas.

11:00–11:30 || Valerio N. Rossetti, Bergen

Encoding of fragments of law manuscripts

This presentation will show the three main problems facing anyone who has to encode law fragments, first with the MenotaBlitz system and then with the full Menota encoding, which has three levels of textual representation: facsimile, diplomatic and normalized.

First, I will give a short overview of the system created by Robert K. Paulsen, showing the procedure used and its main characteristics. Since I do not work on lemmatization, this presentation will mainly focus on the encoding of the facsimile and diplomatic versions of the fragments.

Next, once the encoding in MenotaBlitz is finished, I will show the work on the normalized level and present the issues that, at this stage, are closely tied to the fragments themselves, together with the challenges these fragments have posed.

These issues will be examined in more detail in a later part of my talk, where I discuss the encoding problems of each fragment: NRA norr fragm 3, NRA norr fragm 5, NRA norr fragm 6 and NRA norr fragm 13.

Finally, I will talk about the use of the encoding in my own master's research.

11:30–12:00 || Robert K. Paulsen, Bergen

Menota via emroon. A detour through the land of referentiality

One of the tasks facing digital editors of Old Norse primary source material is the transcription of the text itself. Another task is the processing of the text in order to create various focal levels of text representation as well as to add further information to each token.

My presentation will pick up where the previous talks left off: once a transcription has been created following the MenotaBliX.xsd standard, one can use the menotaBlitz.plx Perl script in order to produce XML files following various TEI-based encoding schemes. The scheme employed by the Menota archive (www.menota.org) allows for three levels of text representation (facsimile, diplomatic, normalized) as well as for the lemmatization and morphological analysis of each token (among other things).

One way towards this kind of digital edition is through a detour to the land of referentiality: After using the Perl script to produce an XML file following the emroon/TEI standard and annotating it with the appropriate referential codes (pointing to a full-form lexicon) for each token, one can view the manuscript material on the emroon database’s website (www.emroon.no) on all three levels and search it based on morphological, orthographical and etymological parameters. Furthermore, an export function allows the user to download full-blown Menota-ready XML files.

In my presentation, I will describe the workflow involved in this edition process and show how data is saved and generated following both the emroon and the Menota framework.
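To picture what the three levels of text representation mean for a single token, here is a minimal Python sketch that reads them from one word element. It assumes a Menota-style layout in which a TEI `<w>` element holds `<me:facs>`, `<me:dipl>` and `<me:norm>` inside `<choice>`, and it assumes the namespace URI shown for the `me:` prefix; both should be checked against The Menota handbook. The word is an invented example, and the sketch does not model emroon's referential codes or the morphological annotation.

```python
import xml.etree.ElementTree as ET

TEI = "http://www.tei-c.org/ns/1.0"
ME = "http://www.menota.org/ns/1.0"  # assumed Menota namespace URI

# Hypothetical word token: facsimile, diplomatic and normalized forms are
# grouped under <choice>, and the lemma sits on the <w> element.
WORD = f"""<w xmlns="{TEI}" xmlns:me="{ME}" lemma="svá">
  <choice>
    <me:facs>ſva</me:facs>
    <me:dipl>ſva</me:dipl>
    <me:norm>svá</me:norm>
  </choice>
</w>"""


def levels(word_xml):
    """Return the lemma and the text of each representation level of one word."""
    w = ET.fromstring(word_xml)
    out = {"lemma": w.get("lemma")}
    for level in ("facs", "dipl", "norm"):
        el = w.find(f"{{{TEI}}}choice/{{{ME}}}{level}")
        # A level may be missing in a partially encoded file.
        out[level] = el.text if el is not None else None
    return out


print(levels(WORD))
```

Keeping all three forms inside one `<w>` means that a search on the normalized form can always be traced back to the exact facsimile spelling of the same token.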

12:00–13:00 Virtual lunch break

13:00–13:30 || Nick Pouls, Bergen

Preparing the Parchment Page: Encoding Pricking and Ruling of Medieval Manuscripts for Quantitative Studies

The relationship between pricking and ruling is vital to understanding the stratigraphic layers of a manuscript page. Manuscript scholars interested in the pricking and ruling of manuscripts will notice two aspects of present scholarship: i) most catalogues provide, if they discuss them at all, only brief remarks on the pricking and ruling of manuscripts; and ii) the encoding systems that have been developed are seldom applied in manuscript research. This paper aims to combine different existing, if less applied, encoding systems for pricking and ruling types in order to show their potential for quantitative studies.

Much attention has been given to the encoding of pricking and ruling types in previous scholarship. The types of pricking are extensively discussed by Leslie Webber Jones, who presents a classification according to their shape and arrangement. Their shapes can be classified into codes A to L, depending on roundness, angle, and vertical or horizontal position. In addition, Jones argues that the arrangement of prickings in inner, outer or combined systems needs to be considered alongside their shape. Different systems for encoding ruling types have been developed by Albert Derolez, Jacques-Hubert Sautel and Denis Muzerelle. Whereas Derolez prefers to group different ruling types, Sautel and Muzerelle present encoded formulae based on the vertical, horizontal, marginal and extensional lines. Building on their excellent scholarship, this paper proposes a combined encoding system for pricking and ruling, drawing especially on Jones and Muzerelle. Through a quantitative encoding of the pricking and ruling of twelfth-century manuscripts from Benedictine and Cistercian monastic institutions in the dioceses of Liège and Tournai, this paper will show the advantages for statistical research.

13:30–14:00 || Þorsteinn Vilhjálmsson, Reykjavík

Encoding Latin, Ancient Greek and Old Norse – differences, similarities and problematics

The PROIEL database (developed by Dag T.T. Haug and colleagues in Oslo) features annotated texts in many languages, among them Greek, Latin and Old Norse. While all are encoded using one system of annotation, there are inevitable differences, depending on the language, between the methods of annotation, the character of common annotation errors, and the filters or screens between the texts, their interpretation and their annotation. This talk will consider these differences, contrast them and analyse them briefly, inviting discussion on the deeper issues of the textual annotation of ancient texts.

PROIEL database (ask for password): http://foni.uio.no/proiel

PROIEL at github: https://github.com/proiel

14:00–14:10 Virtual tea & coffee break

14:10–14:40 || Fartein Th. Øverland, Cluj-Napoca

Syntactic annotation of skaldic poetry with dependency analysis

The Old Norse language is characterised by relatively free word order, in prose as well as in poetry. In skaldic poetry, particularly in the dróttkvǽtt style, this possibility was taken to the extreme. From the perspective of any syntactic model in which word order is a variable, many skaldic stanzas will probably have to be considered ungrammatical. There is, however, a longstanding tradition of transforming skaldic word order into that of ordinary prose in order to make the stanzas easier to interpret and to indicate the proposed reading. This suggests that the underlying syntactic structure of skaldic poetry is actually more similar to prose syntax than it appears on the surface. Dependency analysis is a syntactic model which is not based on word order. It is therefore a suitable model and tool for annotating skaldic poetry in order to uncover these underlying structures. This presentation will reflect on the experience of annotating skaldic verse using the dependency analysis of the Menotec project (https://www.menota.org/menotec.xml).
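The point that dependency analysis does not rest on word order can be illustrated with a minimal Python sketch. Each token gets a stable identifier, a head and a relation label, and a prose order and a verse-like order with a split noun phrase turn out to yield the same set of dependency arcs, even though the adjective and its noun are no longer adjacent. The clause is invented and simplified, the relation labels (`sub`, `obj`, `atr`, `pred`) are assumed to follow PROIEL-style conventions, the `ROOT` marker is a convention of this sketch, and none of this reproduces the actual Menotec annotation format.

```python
from typing import NamedTuple, Optional


class Token(NamedTuple):
    tid: int              # stable identifier, independent of word order
    form: str
    head: Optional[int]   # tid of the head token; None marks the root
    relation: str         # PROIEL-style relation label (assumed)


def arcs(tokens):
    """Return the set of (head form, relation, dependent form) arcs.

    Only head-dependent links are used, so the result ignores word order.
    """
    by_id = {t.tid: t for t in tokens}
    return {
        (by_id[t.head].form if t.head is not None else "ROOT", t.relation, t.form)
        for t in tokens
    }


def adjacent(tokens, a, b):
    """True if the tokens with ids a and b stand next to each other."""
    pos = {t.tid: i for i, t in enumerate(tokens)}
    return abs(pos[a] - pos[b]) == 1


# Invented clause 'konungr gaf rautt gull' ("the king gave red gold").
konungr = Token(1, "konungr", 2, "sub")
gaf = Token(2, "gaf", None, "pred")
rautt = Token(3, "rautt", 4, "atr")
gull = Token(4, "gull", 2, "obj")

prose = [konungr, gaf, rautt, gull]
verse = [rautt, gaf, konungr, gull]  # adjective split from its noun

print(arcs(prose) == arcs(verse))                    # True: same structure
print(adjacent(prose, 3, 4), adjacent(verse, 3, 4))  # True False
```

Because the tree is defined by head links rather than positions, the verse order needs no special treatment; a word-order-based model would instead have to account for the gap between `rautt` and `gull`.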

14:40–15:10 || Alexander Pfaff, Oslo

Annotating noun phrases in Old Icelandic – The NPEGL database

One task of the NFR-funded project “Constraints on syntactic variation: Noun Phrases in Early Germanic Languages” has been to create a database (NPEGL) comprising material from Old Icelandic, Old English, Old Saxon, Old High German (and Gothic). NPEGL is a highly specialized database: it is not text-based, but every entry is, by definition, a noun phrase.

In this talk, I will first outline and discuss the individual components of NPEGL: (i) the basic categories and the (sub-)categorization system, (ii) segmentation and syntactic parsing, (iii) formal and (iv) semantic properties and the feature system involved. This will be illustrated by some actual examples and real-time annotation.

As for (iv), NPEGL provides an assortment of features dedicated to nominal, adjectival and genitival semantics. While formal properties are relatively straightforward to operationalize and the respective feature annotation is not very problematic, these semantic properties do constitute a challenge. Based on Old Icelandic examples, I will discuss and illustrate a number of such challenges.

15:10–15:20 Virtual tea & coffee break

15:20–15:50 || Omar Khalaf, Padova, and Raffaele Cioffi, Torino

Editing the Italian way: A scholarly digital edition of Naples, Biblioteca Nazionale MS XIII.B.29

This paper will illustrate the project of a scholarly digital edition of Naples, National Library MS XIII.B.29.

Dating from the fifteenth century, this manuscript contains miscellaneous texts in prose and verse. Moreover, quite interestingly, it is the only Middle English manuscript extant in Italy. Although nothing is known about its origins or the reason for its presence in Italy, a number of inscriptions and notes in sixteenth- and seventeenth-century Italian bear witness to a certain local interest, if not in the texts, at least in the codex per se. In particular, an illustration and a colophon suggest that it at some point fell into the hands of the philosopher Tommaso Campanella, a protagonist of the Neapolitan cultural milieu of that time. Little interest has been shown in the texts contained in the codex, which have seldom been edited separately. From a neophilological perspective, however, this manuscript is of great relevance both as a physical artefact with its own history and as a witness to the mouvance of each text within its own tradition.

The main purpose of the project is to create an image-based, double-layered (diplomatic and interpretative) edition that might enable scholars and students to fully appreciate the physical and textual features of the codex. This paper aims to provide a preliminary TEI mark-up schema for the texts and the paratextual elements and to propose a preliminary plan for the scholarly edition, which will be realised through EVT (Edition Visualization Technology), an Italian open-source tool that has already been successfully employed in other similar projects, among them the Vercelli Book (http://vbd.humnet.unipi.it/) and the Codice Pelavicino Lombardo (https://pelavicino.labcd.unipi.it/).

15:50–16:20 || Alessandro Palumbo and Federico Aurora, Oslo

ENCODE: Bridging the <gap> in Ancient Writing Cultures: ENhance COmpetences in the Digital Era

This paper presents a newly started Erasmus+ project, whose overarching aim is to promote the use of digital methods in the preservation and study of pre-modern written texts. Traditional disciplines in the humanities like philology, palaeography and epigraphy are increasingly embracing digital change, developing and using new tools and methods to approach their objects of study. However, these innovations require new competences and training for both students and researchers in the rapidly evolving field of Digital Humanities and AI. The ambition of the ENCODE project is to establish a collaborative and shared platform for the teaching and learning of these digital competences. To reach this goal, a partnership has been established between six universities (Bologna, Parma, Würzburg, Leuven, Hamburg, Oslo), which will organize a series of events, conferences and training sessions during the period 2020-2023; these will also be open to participants from institutions outside the six project partners. The project and the events planned will lead to three main outcomes:

1) The surveying of existing competences and practices in the teaching of digital methods applied to the study of pre-modern texts.

2) The formulation of a shared definition of digital competencies needed by academic staff and students involved in programmes focusing on written cultural heritage.

3) The design and testing of teaching modules, both basic and advanced, paired with guidelines and examples of best practices for their employment at other universities, making the modules customizable and transferable.

16:20 Final discussion and concluding remarks

Shared paper presentations

Sven Kraus, Basel - Menota as a basis for hyper-annotated editions. Experiments with Pamphilus saga

Paola Peratello, Verona - Encoding scribal and editorial interventions in AM 28 8vo (Codex Runicus)

Tonje H. Waldersnes, Bergen - Encoding of 14th and 15th century diplomas

Valerio N. Rossetti, Bergen - Encoding of fragments of law manuscripts

Robert K. Paulsen, Bergen - Menota via emroon. A detour through the land of referentiality

Fartein Th. Øverland, Cluj-Napoca - Syntactic annotation of skaldic poetry with dependency analysis