Stochastic Cluster Embedding

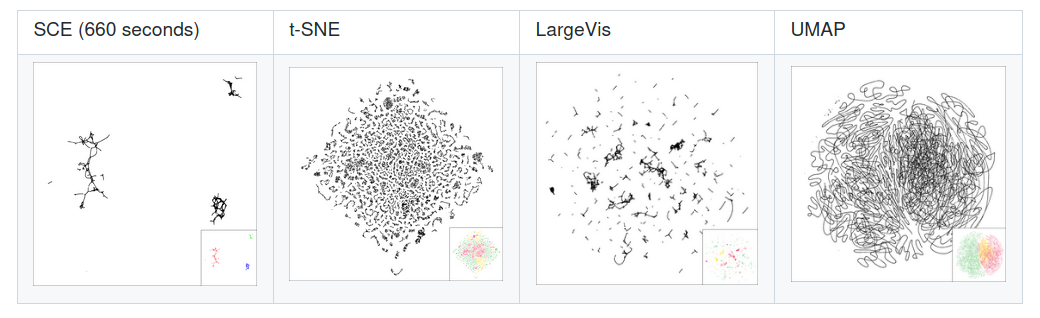

Abstract: Neighbor Embedding (NE), which aims to preserve pairwise similarities between data items, has been shown to yield an effective principle for data visualization. However, even the best current NE methods, such as Stochastic Neighbor Embedding (SNE), may leave large-scale patterns such as clusters hidden despite strong signals being present in the data. To address this, we propose a new cluster visualization method based on Neighbor Embedding. We first present a family of Neighbor Embedding methods that generalizes SNE by using non-normalized Kullback-Leibler divergence with a scale parameter. In this family, much better cluster visualizations often appear with a parameter value different from the one corresponding to SNE. We also develop efficient software that employs asynchronous stochastic block coordinate descent to optimize the new family of objective functions. The experimental results demonstrate that our method consistently and substantially improves visualization of data clusters compared with state-of-the-art NE approaches.
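To make the idea of a "scale parameter" concrete, here is a minimal sketch of a non-normalized KL divergence between input similarities P and scaled embedding similarities s·Q. It is an illustration under stated assumptions, not the authors' software: the abstract does not give the formula, so the Student-t style kernel, the function names, and the toy data are all hypothetical, and no optimizer is included. The sketch only checks a standard fact: when P sums to one, choosing s = 1 / ΣQ makes the non-normalized divergence equal to the usual normalized KL divergence, which is one way a particular scale value can correspond to SNE.

```python
import math
import random


def kernel_similarities(Y):
    """Unnormalized q_ij = 1 / (1 + ||y_i - y_j||^2) for i != j (assumed kernel)."""
    n = len(Y)
    Q = {}
    for i in range(n):
        for j in range(n):
            if i != j:
                d2 = sum((a - b) ** 2 for a, b in zip(Y[i], Y[j]))
                Q[(i, j)] = 1.0 / (1.0 + d2)
    return Q


def nonnormalized_kl(P, Q, s):
    """D(P || s*Q) = sum_ij [ p_ij * ln(p_ij / (s * q_ij)) - p_ij + s * q_ij ]."""
    total = 0.0
    for key, q in Q.items():
        p = P.get(key, 0.0)
        if p > 0.0:
            total += p * math.log(p / (s * q)) - p
        total += s * q
    return total


def normalized_kl(P, Q):
    """KL(P || Q / sum(Q)), the divergence with Q normalized to sum to one."""
    z = sum(Q.values())
    return sum(p * math.log(p / (Q[key] / z)) for key, p in P.items() if p > 0.0)


# Toy data (hypothetical): 6 points in 2-D and random input similarities.
rng = random.Random(0)
n = 6
Y = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(n)]
raw = {(i, j): rng.random() + 1e-3 for i in range(n) for j in range(n) if i != j}
raw_sum = sum(raw.values())
P = {key: value / raw_sum for key, value in raw.items()}  # P sums to one

Q = kernel_similarities(Y)
s_sne = 1.0 / sum(Q.values())  # scale at which the two divergences coincide

for factor in (0.5, 1.0, 2.0):
    s = factor * s_sne
    print(f"s = {factor} * s_sne: D = {nonnormalized_kl(P, Q, s):.6f}")
print(f"normalized KL: {normalized_kl(P, Q):.6f}")
```

For a fixed embedding, s = 1 / ΣQ is also the scale that minimizes this divergence, so other values of s give a larger objective value here. Which scale produces better cluster visualizations after optimizing the embedding is the empirical question the talk addresses; the sketch only shows where the parameter enters.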

Speaker: Zhirong Yang received his doctoral degree from Helsinki University of Technology, Finland, in 2008. He is a professor at the Norwegian Open AI Lab and the Department of Computer Science at the Norwegian University of Science and Technology. He is also an IEEE Senior Member and leads the project "ShuttleNet: Scalable Neural Models for Long Sequential Data" (NFR 2018–2023). His current research interests include data visualization, cluster analysis, and learning representations for structured data.