Preparation and imaging of life science serial sections

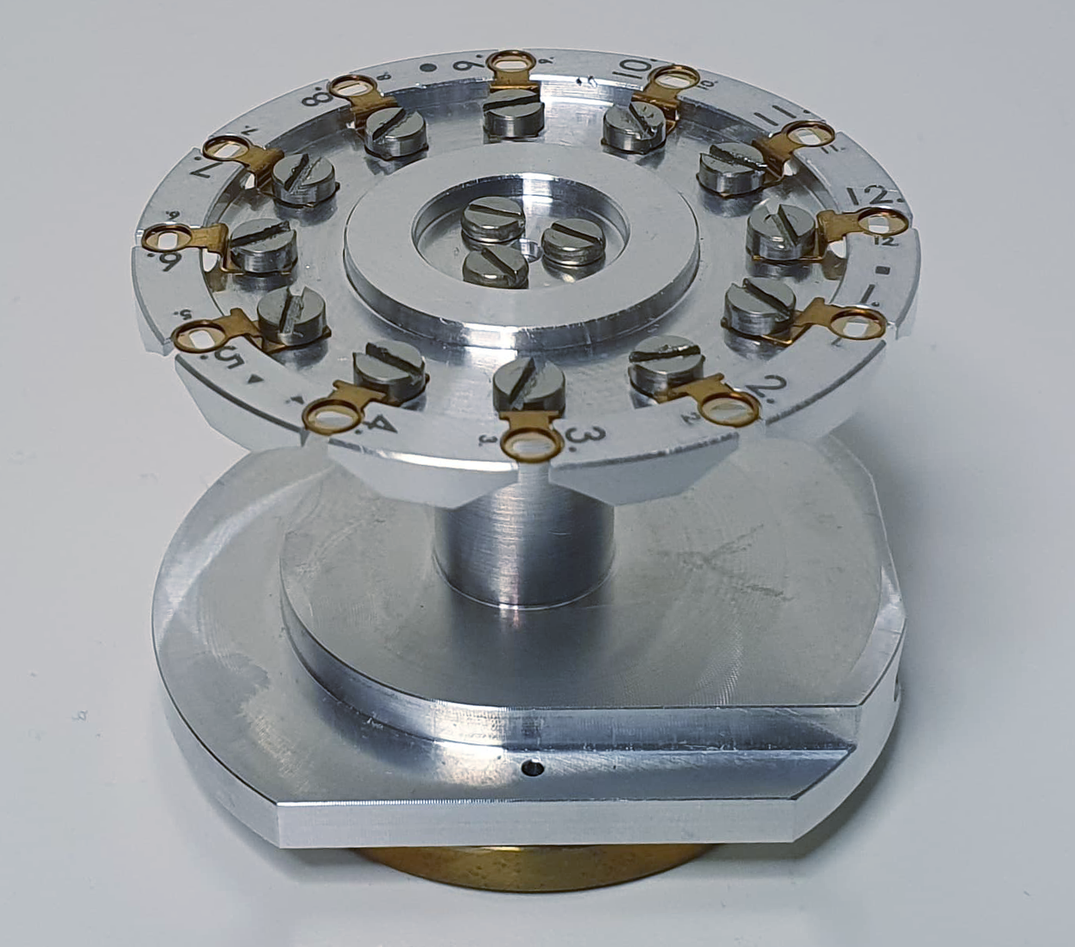

Ultrathin sections of life science samples can be imaged in several ways in a scanning electron microscope (SEM). Sections mounted on standard TEM grids can be imaged in true transmission mode using a STEM detector. This mode provides the highest resolution of the approaches described here (< 1 nm), sufficient to resolve the bilayer structure of biomembranes. The sample holders available at ELMILAB hold 12 standard TEM grids, which can be examined and imaged automatically without exchanging samples.

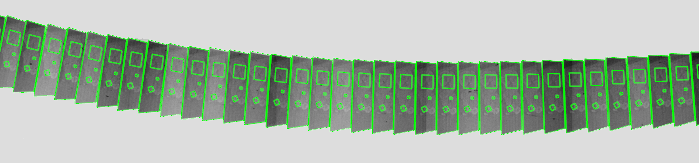

Slightly lower resolution but even higher throughput are possible when the sections are mounted on conductive solid substrates, such as coated glass slides or silicon wafers, and imaged with backscattered-electron or in-lens secondary-electron detectors. A single slide can carry hundreds of sections, firmly attached to a perfectly flat surface, which is ideal for automated imaging. This approach, known as array tomography, is popular for generating large, high-resolution 3D atlases of cells, tissues, or even whole small organisms. Compared with other 3D EM approaches, in which the sample is sectioned inside the microscope, array tomography requires the user to cut the sections physically and is computationally more demanding. In return, the sections are preserved: they can be reimaged at higher resolution and screened at intervals before specific regions are chosen for 3D imaging.
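To give a sense of how quickly array tomography data grow, the following Python sketch estimates the number of sections and the raw image volume for a single run. All numbers (a 20 µm deep block, 50 nm sections, a 100 µm × 100 µm field, 5 nm pixels, 8-bit images) are hypothetical assumptions chosen for illustration, not ELMILAB parameters, and the estimate ignores tile overlap, metadata, and compression.

```python
def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding floating-point rounding."""
    return -(-a // b)


def estimate_run(depth_nm, section_nm, width_nm, height_nm, pixel_nm, bytes_per_pixel=1):
    """Rough section count, pixels per section, and raw bytes for one run.

    All lengths are integers in nanometres. Tile overlap, metadata and
    compression are ignored, so this is only an order-of-magnitude estimate.
    """
    n_sections = ceil_div(depth_nm, section_nm)
    pixels_per_section = ceil_div(width_nm, pixel_nm) * ceil_div(height_nm, pixel_nm)
    total_bytes = n_sections * pixels_per_section * bytes_per_pixel
    return n_sections, pixels_per_section, total_bytes


# Hypothetical run (assumed values): 20 µm deep block cut into 50 nm sections,
# 100 µm x 100 µm field imaged at 5 nm pixels with 8-bit grey values.
n_sections, pixels, total = estimate_run(
    depth_nm=20_000, section_nm=50, width_nm=100_000, height_nm=100_000, pixel_nm=5
)
print(n_sections, "sections,", pixels, "pixels each,", total / 1e9, "GB raw")
```

With these assumptions the run yields 400 sections and about 160 GB of raw 8-bit data. Because the pixel count scales with the square of the lateral sampling, halving the pixel size quadruples the data volume, which is one reason why large array tomography projects quickly reach the terabyte range.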

Generating large 3D data sets is only meaningful if sample fixation, embedding, and staining have been optimized beforehand. ELMILAB offers microwave-assisted sample preparation, which considerably shortens preparation time and allows preparation parameters to be optimized efficiently. The ultramicrotomes available at ELMILAB have custom add-ons for preparing artifact-free serial sections.

ELMILAB applies image processing routines that turn raw 3D data sets into smooth image stacks suitable for automated downstream analyses such as feature recognition and segmentation. On request, we deliver large data sets in chunked, hierarchical data formats. Because software can then load only the chunks it needs instead of the whole file, even terabyte-scale data sets can be screened and analyzed efficiently on standard computer hardware.
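Chunked formats such as HDF5 or Zarr (the page does not state which format ELMILAB delivers) store a volume as a grid of fixed-size blocks, and multiscale variants add downsampled copies for fast overviews. The Python sketch below illustrates the underlying arithmetic: which chunks overlap a region of interest, and what array shapes a simple pyramid would have. The volume size, the 64 × 512 × 512 chunk shape, and the choice to downsample only y and x are hypothetical assumptions for illustration.

```python
from itertools import product


def chunks_for_roi(roi_start, roi_stop, chunk_shape):
    """Grid indices of every chunk that overlaps a box-shaped region of interest.

    roi_start is inclusive and roi_stop exclusive, both in voxels as (z, y, x).
    """
    ranges = [
        range(start // c, (stop - 1) // c + 1)
        for start, stop, c in zip(roi_start, roi_stop, chunk_shape)
    ]
    return list(product(*ranges))


def pyramid_shapes(shape, levels):
    """Array shapes of a multiscale pyramid that halves y and x at each level.

    Keeping z unchanged is an assumption suited to anisotropic serial-section
    data, where section thickness already exceeds the lateral pixel size.
    """
    shapes = [tuple(shape)]
    for _ in range(levels - 1):
        z, y, x = shapes[-1]
        shapes.append((z, -(-y // 2), -(-x // 2)))  # ceiling division
    return shapes


# Hypothetical volume: 400 sections of 20 000 x 20 000 px (8-bit, uncompressed),
# stored in 64 x 512 x 512 voxel chunks.
volume = (400, 20_000, 20_000)
chunk = (64, 512, 512)

roi = chunks_for_roi((100, 5_000, 5_000), (110, 6_000, 6_000), chunk)
chunk_bytes = chunk[0] * chunk[1] * chunk[2]
print(len(roi), "chunks,", len(roi) * chunk_bytes / 1e6, "MB to read")
print(pyramid_shapes(volume, 4))
```

In this example a 10 × 1000 × 1000 voxel region touches only 9 chunks (about 151 MB), whereas the full volume occupies 160 GB. Libraries such as h5py and zarr perform this chunk lookup internally when a dataset is sliced like a NumPy array; the sketch only makes the bookkeeping visible.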